Case Study

On-Demand Infrastructure Deployment and Configuration for Cybus QA Team

Industry

- Data Integration

- Edge Computing

- Industrial Connectivity

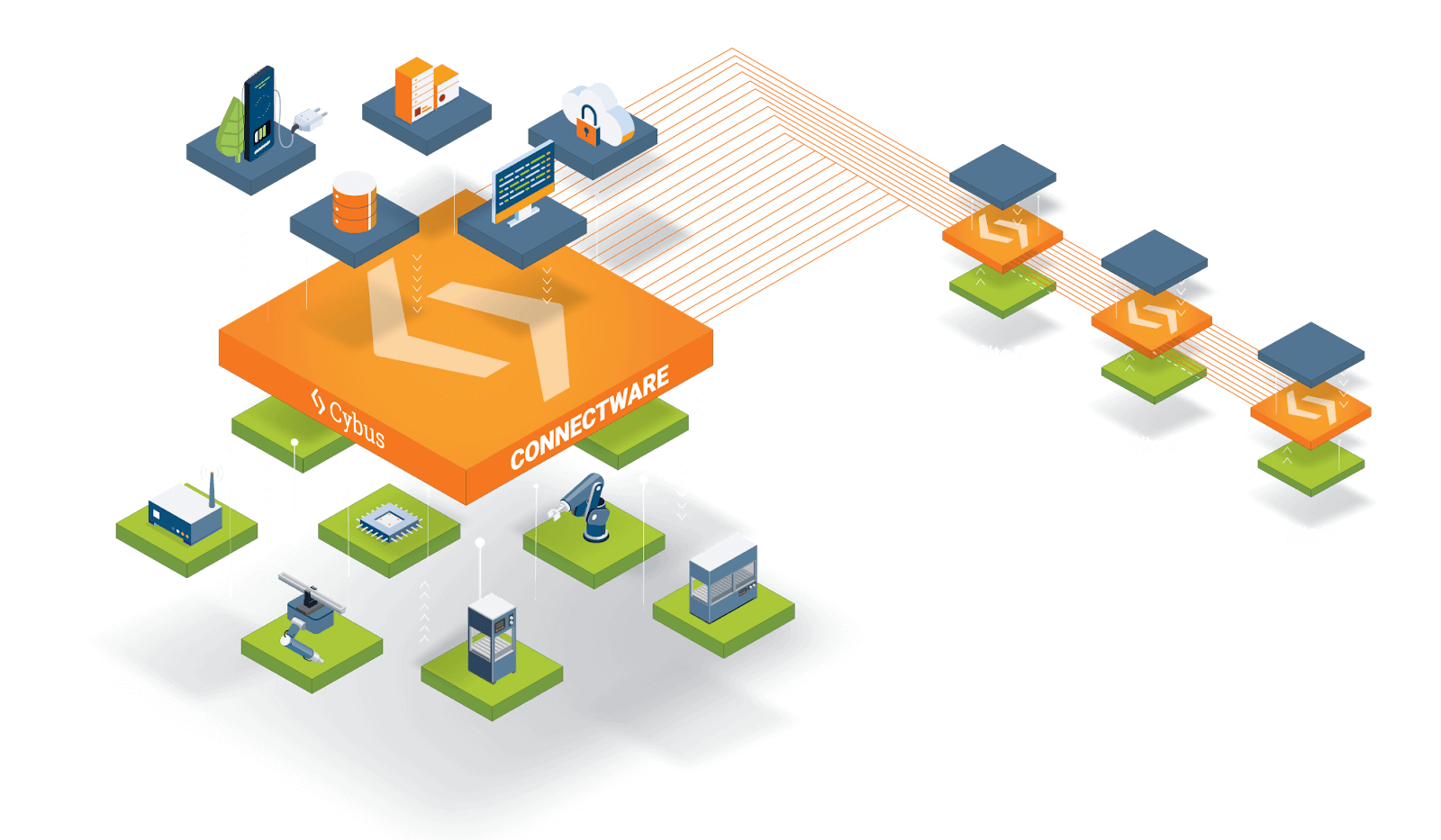

Headquartered in Germany, Cybus offers Connectware, a Factory Data Hub that connects IoT and IT systems company-wide. Cybus enables organizations to collect, standardize, process, and distribute data, supporting diverse Industrial IoT use cases with a global data flow across all facilities. The Cybus QA team needs to test its applications on various platforms, across different scenarios and versions.

To address the specific needs of the Cybus QA team, we developed a solution for on-demand infrastructure deployment and configuration. The solution provisions infrastructure with Terraform, ensuring consistent and scalable resource allocation. After deployment, environments are configured using Ansible or Helm, and applications run in Docker containers (EC2 deployments) or pods (Kubernetes deployments), making them ready for immediate testing.
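The provisioning-then-configuration flow described above can be sketched as a small command planner. This is a minimal illustration, not the actual implementation: the `DeploymentRequest` type, the `build_commands` function, and the file and chart names (`inventory.ini`, `site.yml`, `./chart`) are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class DeploymentRequest:
    """Hypothetical description of one on-demand test environment."""
    name: str
    target: str  # "ec2" or "kubernetes"
    terraform_vars: Dict[str, str] = field(default_factory=dict)


def build_commands(req: DeploymentRequest) -> List[List[str]]:
    """Return the CLI commands for provisioning, then configuration."""
    apply_cmd = ["terraform", "apply", "-auto-approve", "-input=false"]
    # Pass each variable to Terraform as "-var key=value", in a stable order.
    for key, value in sorted(req.terraform_vars.items()):
        apply_cmd += ["-var", f"{key}={value}"]

    commands = [["terraform", "init", "-input=false"], apply_cmd]

    if req.target == "ec2":
        # Assumed file names: inventory.ini and site.yml.
        commands.append(["ansible-playbook", "-i", "inventory.ini", "site.yml"])
    elif req.target == "kubernetes":
        # Assumed chart path ./chart; release and namespace reuse the name.
        commands.append([
            "helm", "upgrade", "--install", req.name, "./chart",
            "--namespace", req.name, "--create-namespace",
        ])
    else:
        raise ValueError(f"unknown target: {req.target}")
    return commands


if __name__ == "__main__":
    demo = DeploymentRequest("qa-demo", "kubernetes", {"instance_count": "2"})
    for cmd in build_commands(demo):
        print(" ".join(cmd))
```

Building the command list separately from running it keeps the plan easy to log and review; each entry could then be passed to `subprocess.run(cmd, check=True)` in order.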

For monitoring, we implemented Loki, which provides real-time log tracking for each deployment, allowing the QA team to quickly identify and resolve issues. Additionally, a real-time monitoring API provides visibility throughout the deployment process, enabling prompt detection of and response to problems. To optimize resource usage, an auto-cleaner job was introduced to automatically detect and clean up inactive or “zombie” deployments, preventing unnecessary resource consumption.
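The selection step of an auto-cleaner job like the one described above might look as follows. This is a sketch under stated assumptions: the `Deployment` record, the `find_zombies` function, the 12-hour idle threshold, and the rule that failed deployments count as zombies are illustrative choices, not details taken from the project.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List


@dataclass
class Deployment:
    """Hypothetical record the cleaner job would read from its store."""
    deployment_id: str
    status: str  # e.g. "running", "failed"
    last_activity: datetime


def find_zombies(
    deployments: List[Deployment],
    now: datetime,
    max_idle: timedelta = timedelta(hours=12),  # assumed threshold
) -> List[str]:
    """Return IDs of deployments that are failed or idle for too long."""
    zombies = []
    for dep in deployments:
        idle_for = now - dep.last_activity
        # Assumption: a failed deployment is always eligible for cleanup.
        if dep.status == "failed" or idle_for > max_idle:
            zombies.append(dep.deployment_id)
    return zombies


if __name__ == "__main__":
    now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
    fleet = [
        Deployment("active", "running", now - timedelta(hours=1)),
        Deployment("stale", "running", now - timedelta(hours=30)),
        Deployment("broken", "failed", now - timedelta(minutes=5)),
    ]
    print(find_zombies(fleet, now))
```

A scheduled job could call `find_zombies` periodically and hand each returned ID to the teardown step, for example a `terraform destroy` run for that environment.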

This integrated approach has significantly improved the Cybus QA team’s ability to deploy, manage, and monitor environments efficiently.

Cloud Services: AWS (S3, EC2, EKS, VPN)

Technologies Used

Terraform

Ansible

Docker

Git

Fluent

Prometheus

Grafana

cAdvisor

Loki

Challenges & Solutions

Handling Large Scale Infrastructure Provisioning

Problem

Solution

Handling Large Infrastructure Configuration

Problem

Solution

Handling Large Infrastructure Configuration

Problem

Solution

Keeping The Software Updated

Problem

Solution

Log And Docker Metrics Monitoring For Each Deployment

Problem

Solution

Concurrent On-Demand Deployment Requests

Problem

Solution

Handling Failed or Zombie Deployments

Problem

Solution

Handling Concurrent Git Pull for Deployment Requests

Problem

Solution

Real-Time Deployment Log Streaming of Our Solution

Problem

Solution

Security

Problem

Solution

Team Involvement

| Resources | Count |
|---|---|
| Backend Developers | 2 |
| Product Manager | 1 |

Core Features of the Software

Infrastructure Deployment

Configuration Management

Log Monitoring

Real-Time Deployment Monitoring

Auto-Cleaner Job

Slack Notifications

Development Timeline


Design and Planning

1 month

Development

4 months

Testing and Quality Assurance

Iterative, with a testing phase after each sprint. Testing is done by both the Cybus QA team and us.

Ready to transform your digital platform into a scalable, user-centric solution?